For almost two decades, IES/NCSER has funded Brian Bottge and his teams at the University of Kentucky and University of Wisconsin-Madison to develop and test the efficacy of a teaching method called Enhanced Anchored Instruction (EAI), which helps low-achieving middle school students with math disabilities develop their problem-solving skills by working through meaningful problems set in real-world contexts. The research findings support the efficacy of EAI, especially for students with math disabilities. Most recently, Bottge and his team have been researching innovative forms of assessment that more adequately capture what students with disabilities know both conceptually and procedurally in solving math problems. With supplemental funding, IES/NCSER extended Dr. Bottge’s latest grant to test the use of oral assessment to measure student knowledge and compare that with the knowledge demonstrated on a pencil-and-paper test. The COVID-19 pandemic introduced added challenges to this work when schools closed and students shifted to online education.

Below we share a recent conversation with Dr. Bottge about the experience of conducting research during a pandemic and what he and his team were still able to learn about the value of oral assessment in mathematics for students with disabilities.

What changes did you observe in the intervention implementation by teachers due to the COVID-related shift to online learning?

The shift to online learning created changes in class size and structure. For 38 days (22 days in classroom, 16 days online through a virtual meeting platform), the middle school special education teacher first taught concepts through a widely used video-based anchored problem, the Kim’s Komet episode of the Jasper Project, in which characters compete in a “Grand Pentathlon.” The teacher then engaged the students in a hands-on application of the concepts by running a live Grand Pentathlon. In the Grand Pentathlon, students make their own cars, race them on a full-size ramp, time them at various release points on the ramp, and graph the information to estimate the speed of the cars. The purpose of both units was to help students develop their informal understanding of pre-algebraic concepts such as linear function, line of best fit, variables, rate of change (slope), reliability, and measurement error. Midway through the study, in-person instruction was suspended and moved online. Instead of working with groups of three to four students in the resource room throughout the day, the teacher provided online instruction to 14 students at one time and scheduled one-on-one sessions with students who needed extra help.

What challenges did you observe in the students interacting with the activities and their learning once they shifted to online learning?

All students had access to a computer at home and they were able to use the online platform without much confusion because they had used it in other classes. The screen share feature enabled students to interact with much of the curriculum by viewing the activities, listening to the teacher, and responding to questions, although they could not fully participate in the hands-on part of the lessons. Class attendance and student behavior were unexpectedly positive during the days when students were online. For example, one student had displayed frequent behavioral outbursts in school but became a positive and contributing member of the online class. The ability to mute mics in the platform gave the teacher the option of allowing only one student to talk at a time.

Were students still able to participate in the hands-on activities that are part of the intervention?

For the hands-on activities related to the Grand Pentathlon competition, the teacher taught online and a research staff member manipulated the cars, track, and electronic timers from campus. Students watched their computer screens waiting for their turn to time their cars over the length of the straightaway. The staff member handled each student’s cars and one by one released them from the height on the ramp as indicated by each student. After students had recorded the times, the teacher asked students to calculate and share the speeds of their cars for each time trial height.
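As a rough illustration of the arithmetic students carried out in this activity, the sketch below computes a car's speed over the straightaway for each release height and then fits a line of best fit to the results. Every number and name in it (the straightaway length, the heights, the times, and the function names) is hypothetical and chosen only for illustration. None of it comes from the study or its materials.

```python
# Illustrative sketch only: all values below are hypothetical, not study data.

STRAIGHTAWAY_FEET = 16.0  # assumed length of the timed straightaway

# Assumed (release height in inches, time over the straightaway in seconds)
trials = [(12, 3.2), (24, 2.0), (36, 1.6), (48, 1.25)]


def speed(distance, seconds):
    """Average speed over the straightaway: distance divided by time."""
    return distance / seconds


def best_fit(points):
    """Least-squares line through (x, y) points; returns (slope, intercept)."""
    n = len(points)
    mean_x = sum(x for x, _ in points) / n
    mean_y = sum(y for _, y in points) / n
    numerator = sum((x - mean_x) * (y - mean_y) for x, y in points)
    denominator = sum((x - mean_x) ** 2 for x, _ in points)
    slope = numerator / denominator
    intercept = mean_y - slope * mean_x
    return slope, intercept


# Speed at each release height, then the trend of speed against height.
speeds = [(height, speed(STRAIGHTAWAY_FEET, t)) for height, t in trials]
slope, intercept = best_fit(speeds)

for height, s in speeds:
    print(f"release height {height} in: {s:.1f} ft/s")
print(f"rate of change: {slope:.3f} ft/s per inch of release height")
```

The slope of the fitted line is the rate of change the unit asks students to reason about informally: how much faster the car travels for each additional unit of release height.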

Do you have any other observations about the impact of COVID-19 on your intervention implementation?

One of the most interesting observations was parent participation in the lessons. Several parents went beyond simply monitoring how their child was doing during the units to actively working out the problems. Some were surprised by the difficulty level of the math problems. One mother jokingly remarked: “I thought the math they were going to do was as easy as 5 + 5 = 10. The next time my son might have to be the parent and I might have to be the student. You all make the kids think and I like that.”

When COVID-19 shut down your participating schools, how were you able to adjust your data collection to continue with your research?

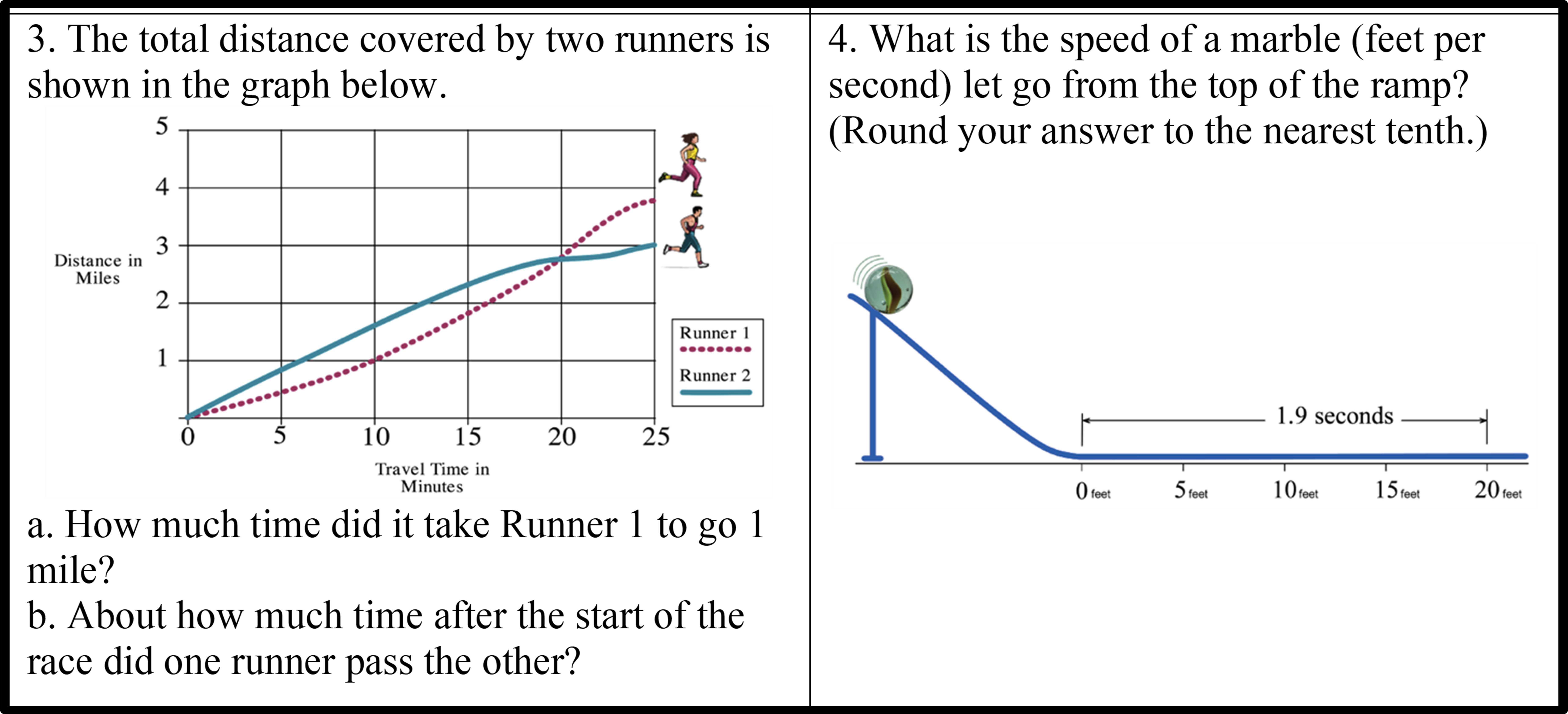

We used the same problem-solving test that we have administered in several previous studies (Figure 1 shows two of the items). On Day 1 of the study (pre-COVID), students took the math pretest in their resource rooms with pencil and paper. Due to COVID-19 school closures, we mailed the posttest and test administration instructions to student homes. On the scheduled testing day during an online class session, students removed the test from the envelope and followed directions for answering the test questions while we observed remotely. On Days 2 and 3 of the study (pre-COVID), an oral examiner (OE) pretested individual students in person. The OE asked the student questions, prompting the student to describe the overall problem, identify the information needed for solving the problem, indicate how the information related to their problem-solving plan, and provide an answer. Due to COVID-19, students took the oral posttests online. The teacher set up a breakout room in the platform where the OE conducted the oral assessments and a second member of the research team took notes.

Figure 1. Sample Items from the Problem-Solving Test

During the testing sessions, the OE projected each item on the students’ computer screens. Then she asked the student to read the problem aloud and describe how to solve it. The OE used the same problem-solving prompts as were used on the pretests. For problems that involved graphs or charts, the OE used the editing tools to make notations on the screen as the students directed. One challenge was that oral testing online made it more difficult to monitor behavior and keep students on task. For example, sometimes students became distracted and talked to other people in their house.

What were the results of this study of oral assessment in mathematics for students with disabilities?

Our results suggest that allowing students to describe their understanding of problems in multiple ways added depth and detail to their answers. We learned from the oral assessment that most students knew how to transfer the data from the table to an approximate location on the graph; however, their placement lacked precision because of a weak understanding of decimals. For item 4 in Figure 1, the use of decimals confused students who did not have much exposure to decimals prior to or during the study. We also found that graphics that were meant to help students understand the text-based items were in some cases misleading. The representation in item 4 was different from the actual ramp and model car activity students experienced virtually. We have used this math test several times in our research and regrettably had no idea that elements of the graphics contributed to misunderstanding.

Unfortunately, our findings suggest that the changes made in response to COVID-19 may have depressed student understanding. Performances on two items (including item 4 in Figure 1) that assessed the main points of the intervention were disappointing compared to results from prior studies. The increase in class size from 3–4 to 14 after the COVID-related school closures and the switch to online learning may have reduced the opportunity for repetition and practice. Students also had fewer opportunities to participate in the hands-on activities and in conversations about their thinking with other students.

We acknowledge the limitations of this small pilot study, which compared students’ knowledge as assessed in a pencil-and-paper format with their knowledge as assessed orally. We are optimistic about the potential of oral assessments to reveal problem-solving insights of students with math disabilities. The information gained from oral assessment is of value if teachers use it to individualize their instruction. As we learned, oral assessment can also point to areas where graphics or other information are misleading. More research is needed to understand the value of oral assessment despite the increase in time it might add to data collection efforts for students with math disabilities. This experience highlights some of the positive experiences of students learning virtually at home during COVID-19, as well as some of the challenges and risks of reduced outcomes from these virtual learning experiences, especially for students with disabilities.

This blog was written by Sarah Brasiel, program officer for NCSER’s Science, Technology, Engineering, and Math program.